"We'd love to implement AI, but our data just isn't ready." — Every operations leader, every time. And increasingly, the wrong answer.

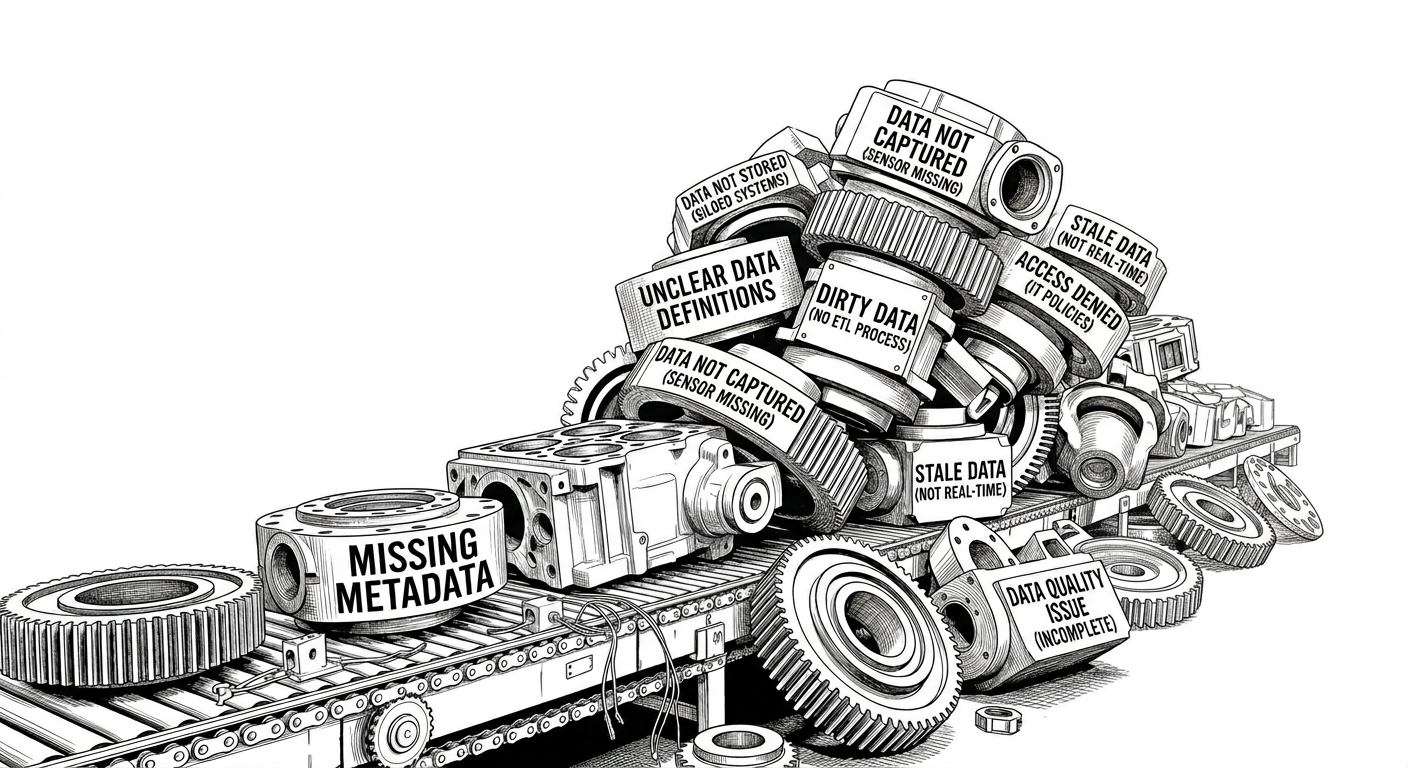

Walk into almost any industrial equipment manufacturer and ask their operations or IT leadership about AI adoption. Chances are, you'll hear some version of the same story: "We'd love to implement AI, but our data just isn't ready." They'll tell you the data isn't being captured. Or that it's being captured but not stored. Or stored but not cleaned. The blockers stack up like machine parts waiting on a backlogged assembly line, and AI remains permanently on the roadmap — never on the floor.

This belief is understandable. It is also, in the era of generative AI, increasingly wrong.

The Old Mental Model of AI

The hesitation comes from a reasonable place. The AI that most manufacturers encountered in the 2010s, such as linear regression models, anomaly detection pipelines, and predictive maintenance dashboards, genuinely did demand clean, structured, well-labeled historical data. If you fed it noise, you got noise back. That version of AI was a precision instrument that required a precision workbench.

THE OLD ASSUMPTION

Traditional AI tools required high-definition, properly structured data as a prerequisite. Without it, there was no point starting. The data pipeline had to come first, the AI second.

So manufacturers built mental frameworks — and budget cycles — around the idea that data readiness was a gate you had to pass before AI could enter. Fix the sensors. Standardize the schema. Clean the historian. Get the data scientists. Then, maybe, AI.

That framework made sense for a previous generation of tools. It does not describe what AI can do today.

What Generative AI Actually Changes

The shift is not incremental. Generative AI fundamentally reorganizes the relationship between data collection and intelligence. Where traditional AI could only consume structured data, generative AI can generate structure from unstructured inputs — and more importantly, it can automate the data collection behavior that manufacturers have been struggling to systematize for years.

This isn't a workaround. It is a fix at the root cause: the data was never missing because of infrastructure failures. It was missing because humans cannot realistically log every observation, every anomaly, every informal diagnosis made in the field. Generative AI can.

AI is no longer just an analyzer waiting at the end of the pipeline. It has become the pipeline itself — capturing, structuring, and transforming information in real time.

— TABBIRD PRODUCT PHILOSOPHY

Consider how this is already playing out in a parallel field: enterprise sales. Sales organizations have begun using AI agents to automatically record and transcribe customer phone calls. The purpose isn't simply to analyze those recordings later; it's to create the high-definition dataset that previously didn't exist.

The AI wasn't deployed because the data was ready. The AI was deployed to make the data ready.

| | Traditional AI | Generative AI |
|---|---|---|
| Input requirement | Structured data only: manually cleaned, labeled, pre-formatted (high prep cost) | Unstructured inputs accepted: notes, invoices, sensor logs, free text (no pre-labeling needed) |
| Bottleneck / capability | Bottleneck: structuring required first, meaning years of ETL, taxonomy alignment, labeling | Capability: structure generated on the fly, with failure modes and root causes extracted automatically |
| Output | Narrow model answers: only answers pre-defined questions | Engineering-grade intelligence: surfaces patterns manufacturers couldn't pre-define |
| Result | Loop stays open: field failures never systematically captured (one-way, backward-looking) | Loop closed: collection behavior automated, continuously |

The Manufacturing Parallel

The same inversion applies on the plant floor. Industrial equipment manufacturers interact with their machines, their technicians, their customers, and their service history through dozens of touchpoints, many of them informal, verbal, or trapped in paper work orders and tribal knowledge.

Maintenance technicians narrate their findings to no one. Service calls reveal failure modes that never get logged. Equipment behavior between scheduled inspections generates observations that live only in someone's memory.

This is not a data infrastructure problem. It is a data capture problem — and the root cause is simpler than most organizations want to admit: human beings are not reliable data loggers.

Technicians are excellent diagnosticians. They are not data entry clerks. The knowledge exists; the recording doesn't happen. Generative AI closes that gap directly, at the source, before anything downstream can fail.

THE NEW REALITY

AI can now listen to technician voice notes, parse unstructured service reports, monitor equipment behavior, and synthesize observations across channels into structured records — automatically, without a pre-built schema, from day one.

The Four Stages — Reimagined

Manufacturers who believe they're "not ready" typically describe their situation in four sequential problems. Here's what each one looks like when AI is part of the solution rather than the reward at the end of it:

- 01 Data Isn't Being Captured

AI agents — whether voice-enabled on a tablet, embedded in a service workflow, or watching a video feed — can capture observations passively. Technicians talk; the AI records, transcribes, and structures. Equipment runs; the AI monitors and logs anomalies. Capture becomes ambient rather than manual.

- 02 Data Is Captured but Not Stored

Modern AI-enabled systems treat storage as an automatic step in the workflow — not a separate infrastructure project. Observations are tagged, timestamped, and routed to the appropriate system of record as a byproduct of the capture process itself.

- 03 Data Is Stored but Not Cleaned

Generative AI can identify, normalize, and reconcile inconsistent records in ways that would take a data engineering team months to script. Duplicate entries, inconsistent part numbering, free-text fields with variable formats — AI handles these as natural language problems, not database problems.

- 04 Data Is Clean but "Not Enough"

AI can combine sparse data with external sources, industry benchmarks, and domain knowledge to generate meaningful starting insights even before a full data history is established. You don't need five years of historian data to begin. You need a starting point.
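To make stages 01 and 02 more concrete, here is a minimal Python sketch of capture-time structuring. It assumes each raw observation (a transcribed voice note, a service report) passes through an extraction step and is then tagged, timestamped, and stored in one motion. The `placeholder_extract` function is a hypothetical stand-in for a generative model call; it uses keyword matching only so the sketch runs on its own. The `Observation` type, the `ROUTES` table, and the store names are likewise illustrative assumptions, not a description of how Tabbird implements this.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable, Optional


@dataclass
class Observation:
    """One raw field observation turned into a structured record."""
    source: str          # e.g. "voice_note" or "service_report"
    raw_text: str        # original, unstructured input kept alongside the structure
    extracted: dict      # fields pulled out of raw_text
    tags: list           # labels used for routing and later search
    captured_at: str     # ISO-8601 UTC timestamp added at capture time


def placeholder_extract(text: str) -> dict:
    """HYPOTHETICAL stand-in for a generative model call.

    A real system would ask a model to return these fields; keyword
    matching is used here only so the sketch runs without one.
    """
    lowered = text.lower()
    failure_mode: Optional[str] = None
    for mode in ("bearing", "seal", "overheat", "vibration"):
        if mode in lowered:
            failure_mode = mode
            break
    return {"failure_mode": failure_mode}


def capture(text: str, source: str,
            extract: Callable[[str], dict] = placeholder_extract) -> Observation:
    """Stage 01: structure the observation the moment it is captured."""
    extracted = extract(text)
    tags = [source]
    if extracted.get("failure_mode"):
        tags.append(extracted["failure_mode"])
    return Observation(
        source=source,
        raw_text=text,
        extracted=extracted,
        tags=tags,
        captured_at=datetime.now(timezone.utc).isoformat(),
    )


# HYPOTHETICAL routing table: which system of record receives which tag.
ROUTES = {"bearing": "warranty_db", "seal": "warranty_db"}


def route(obs: Observation, stores: dict) -> str:
    """Stage 02: store the observation as part of the same workflow.

    Returns the name of the store it landed in; anything without a
    matching tag falls back to a general service log.
    """
    target = next((ROUTES[t] for t in obs.tags if t in ROUTES), "service_log")
    stores.setdefault(target, []).append(obs)
    return target


if __name__ == "__main__":
    stores: dict = {}
    obs = capture("Customer reports grinding noise; replaced front bearing.", "voice_note")
    print(route(obs, stores), obs.tags)
```

Because storage happens in `route`, called right after `capture`, there is no separate step for anyone to forget. Swapping `placeholder_extract` for a real model call would change what gets extracted, not how it is stored.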

AI as the Full Loop, Not the Final Step

The question manufacturers need to stop asking is "Is our data good enough to feed AI?" That question has a paralysis-inducing answer: it never feels good enough.

The right question is "Where in our workflows is valuable knowledge being lost right now — and how do we deploy AI to start capturing it?"

Those are very different questions. One produces a waiting game. The other produces a starting point.

What This Looks Like in Practice

Imagine a field service technician completing a repair on a piece of capital equipment at a customer site. Historically, the value of that interaction — the failure mode they found, the workaround they used, the customer's description of how the equipment behaved before it failed — was lost the moment the technician drove away.

At best, it made it into a terse work order. At worst, it existed only in that technician's head.

With an AI-enabled field service workflow, that same interaction becomes a structured data event. The technician's voice notes are transcribed and tagged. The customer's complaint language is captured and categorized. The repair actions are linked to the equipment's service history. Photos of the failure are analyzed and stored with contextual metadata.
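Linking repair actions to an equipment's service history can be sketched as grouping structured events by serial number. In this minimal Python sketch, `ServiceEvent` and `ServiceHistory` are hypothetical names, and every field is assumed to have been filled in upstream by the transcription, categorization, and photo analysis described above, none of which is shown here. Once events share a key, even a basic count across the installed base starts to surface recurring failure modes.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ServiceEvent:
    """HYPOTHETICAL structured record of one field service visit."""
    serial_number: str        # links the event to a specific machine
    complaint_category: str   # customer's description, already categorized
    failure_mode: str         # what the technician found
    repair_action: str        # what the technician did
    photo_ids: tuple = ()     # references to stored, annotated photos


class ServiceHistory:
    """Groups service events by the equipment they belong to."""

    def __init__(self) -> None:
        self._events = defaultdict(list)  # serial_number -> list of ServiceEvent

    def record(self, event: ServiceEvent) -> None:
        self._events[event.serial_number].append(event)

    def events_for(self, serial_number: str) -> list:
        # .get avoids creating an empty entry for unknown serial numbers
        return list(self._events.get(serial_number, []))

    def recurring_failures(self, min_count: int = 2) -> dict:
        """Failure modes reported at least min_count times across all equipment."""
        counts = Counter(
            event.failure_mode
            for events in self._events.values()
            for event in events
        )
        return {mode: n for mode, n in counts.items() if n >= min_count}
```

The design choice here is simply a shared key: the value comes less from any one record than from every record landing where it can be compared with the others.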

By the time the technician drives away, the manufacturer has a richer record of that event than most organizations generate in a full quarterly review cycle.

The manufacturers who move first won't win because they had better data. They'll win because they used AI to build better data before their competitors thought to start.

How Tabbird Approaches This

Tabbird is built on a single conviction: manufacturers shouldn't have to solve their data problems before they can benefit from AI. The two things should happen together — AI deployed at the capture layer, generating value immediately while building the data foundation that unlocks deeper intelligence over time.

That means starting where knowledge is currently being lost — service calls, technician observations, customer-reported failures, equipment behavior between scheduled inspections — and making those invisible events visible. Not eventually. From the first deployment.

The manufacturers who move first won't win because they had better data to begin with. They'll win because they started capturing it before their competitors thought to.