"Build your data infrastructure first. Build your golden data layer first. Then you'll be ready for AI." — Your consulting partner, 2024. Also your consulting partner, 2014.

We've Heard This Story Before

Circa 2012–2018. Cloud providers and consulting firms arrived with a consistent message: your company's future depends on building a modern data architecture. Data lakes. Golden data layers. Master data management programs. Enterprise data warehouses with six-figure annual licensing.

The sell was logical and seductive: once you had clean, unified, governed data, insight and automation would follow naturally. The ROI was always described as downstream — visible only after the infrastructure was in place.

Most companies built the lake. Many filled it. Few generated the business value they were promised.

Now look at what's forming. OpenAI has signed up with Accenture. Anthropic has partnered with Cognizant. The major consulting firms are releasing Gen AI infrastructure architectures — reference designs for how enterprises should build their AI foundation. The token consumption gets built in from day one.

The pattern is not subtle. Infrastructure investment is presented as the prerequisite to transformation. The business case is always deferred: it materializes only after the foundation is complete. The consulting engagement is measured in months and scope, not outcomes.

If you were in the room during digital transformation, you recognize this architecture. Different vocabulary. Same motion.

Did the Data Lake Actually Pay Off?

WORTH ASKING

Before authorizing an AI infrastructure program, pull up the original business case for your data lake. How many of those projected ROI items were ever realized? Which ones?

This is not a rhetorical question. It deserves a genuine answer before committing to the next infrastructure wave.

The honest post-mortems on big data programs tend to reveal a consistent story. The infrastructure was delivered — often over budget and over schedule, but delivered. The golden data layer was built, certified, and handed over. Then the transformation programs that were supposed to consume it moved slowly, stalled against organizational inertia, or were deprioritized in favor of other initiatives.

The data existed. The insights didn't follow automatically. The automation didn't appear on its own. The business value turned out to require a second, third, and fourth investment that the original infrastructure program never budgeted for.

Infrastructure without a specific business problem to solve is just expensive storage with good governance documentation.

The honest question is not whether data infrastructure has value. It does. The question is whether the sequence matters — and whether starting with infrastructure, rather than with the business problem, is the right way to generate ROI in a reasonable timeframe.

For most manufacturers who went through that cycle, the answer in retrospect is that the sequence was backwards.

Work Backwards From What You Actually Want to Improve

There's a more productive starting point. Instead of asking "What infrastructure do we need to be AI-ready?", ask a harder and more grounding set of questions:

- 01 What specific operation do we want to automate?

Not "improve throughput broadly" — identify the task, the person currently doing it, and the time it consumes per week.

- 02 What analysis do we wish we had but don't?

Where are your teams making decisions today based on gut feel, because the structured data doesn't exist or isn't accessible in time?

- 03 What do we want to predict, and what would we do differently if we could?

Prediction without a downstream action changes nothing. Define the decision that prediction would improve before building the model.

- 04 Where is the software or AI gap preventing that outcome?

Now identify what's actually missing. It might be a data connector. It might be a classification model. It might be a dashboard that surfaces a pattern already buried in your CMMS.

- 05 What is the minimum viable implementation that delivers measurable impact?

Not the full vision — the next concrete step that produces a result you can measure and defend.
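To make the first and last questions concrete, here is a minimal sketch of how a team might compare candidate tasks by the hours a scoped automation could return. Everything in it is an assumption for illustration: the `CandidateTask` fields, the `automatable_share` estimate, the 48 working weeks, and the example figures are placeholders, not measurements.

```python
from dataclasses import dataclass


@dataclass
class CandidateTask:
    name: str                 # the specific task (question 01)
    owner: str                # the person or role doing it today
    hours_per_week: float     # time it currently consumes
    automatable_share: float  # assumed fraction automation could absorb (0..1)


def annual_hours_saved(task: CandidateTask, working_weeks: int = 48) -> float:
    """Hours per year a scoped automation might return, under the stated assumptions."""
    return task.hours_per_week * task.automatable_share * working_weeks


def rank_by_impact(tasks: list[CandidateTask]) -> list[CandidateTask]:
    """Largest estimated saving first, so the next concrete step is easy to pick."""
    return sorted(tasks, key=annual_hours_saved, reverse=True)


# Illustrative placeholder figures only.
candidates = [
    CandidateTask("Compile weekly failure report", "reliability engineer", 4.0, 0.75),
    CandidateTask("Triage warranty claims", "service coordinator", 10.0, 0.5),
]

for task in rank_by_impact(candidates):
    print(f"{task.name}: ~{annual_hours_saved(task):.0f} h/year")
```

The point of the exercise is less the arithmetic than the discipline: a task that cannot be given a name, an owner, and an hours figure is not yet ready to be automated.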

This sequence doesn't produce the same kind of consulting engagement. It doesn't generate the same token burn. But it has a significant advantage: it generates business value first, and builds toward a larger architecture from a foundation of validated impact rather than theoretical future ROI.

The Right Solution Might Not Be a Chatbot or Search Bar

When AI budgets get approved, there's a gravitational pull toward visible, demonstrable AI interfaces. Chatbots. AI agents. Natural language interfaces. These are intuitive to show in a demo and easy to connect to a narrative about transformation.

For industrial manufacturers, they are often not the highest-value place to start.

| Problem Type | Chatbot / AI Agent | Targeted AI Feature |
|---|---|---|
| Field failure pattern recognition | Requires structured queries; doesn't surface what you don't know to ask | Semantic clustering surfaces recurring patterns automatically, with no query needed |
| Technician knowledge capture | Depends on voluntary, consistent input across a field org | Structured capture at point-of-service with AI assist is more reliable |
| PM schedule optimization | Can generate recommendations but lacks operational context integration | Direct connector to EAM/CMMS data enables automated schedule adjustment |
| Cross-account failure analysis | Can synthesize if structured data is available; adds little if it isn't | Aggregation and pattern detection across accounts is a data pipeline problem |
| Executive reporting | Variable output quality; not auditable | Consistent, automated dashboard with defined metrics and clear sourcing |

This isn't an argument against chatbots or AI agents — both have genuine use cases. It's an argument for matching the solution format to the problem rather than starting with the technology and working backwards to justify it.
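To illustrate the first row of the table, the sketch below groups free-text field reports without anyone writing a query. It is a deliberately simple stand-in: it uses word overlap (Jaccard similarity) and a greedy single pass, where a production system would more likely use semantic embeddings. The function names, the stopword list, the 0.3 threshold, and the sample reports are all hypothetical, and this is not a description of any vendor's implementation.

```python
import re

STOPWORDS = {"the", "a", "an", "on", "at", "in", "of", "and", "after", "during", "is", "was"}


def tokens(text: str) -> set[str]:
    """Lowercase word set with common filler words removed."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def jaccard(a: set[str], b: set[str]) -> float:
    """Word-overlap similarity between 0 and 1."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def cluster_reports(reports: list[str], threshold: float = 0.3) -> list[list[str]]:
    """Greedy single pass: join the first cluster whose seed report is similar enough."""
    clusters: list[tuple[set[str], list[str]]] = []
    for report in reports:
        toks = tokens(report)
        for seed, members in clusters:
            if jaccard(toks, seed) >= threshold:
                members.append(report)
                break
        else:
            # No existing cluster matched, so this report seeds a new one.
            clusters.append((toks, [report]))
    # Largest clusters first: these are the recurring patterns.
    return sorted((members for _, members in clusters), key=len, reverse=True)


# Made-up sample reports for illustration.
reports = [
    "Hydraulic pump seal leaking after cold start",
    "Seal leaking on hydraulic pump",
    "Control panel display flickers",
    "Hydraulic pump seal leaking during cold start",
    "Display on control panel flickers at startup",
]
for group in cluster_reports(reports):
    print(len(group), group)
```

Nobody asked "how many pump seal leaks did we have?" The grouping surfaces the pattern on its own, which is the property a chat interface cannot provide when you don't know what to ask.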

TABBIRD IN PRACTICE

Closing the Loop Between Field Failures and Product Teams

Tabbird's Cluster feature does exactly this: it applies agentic clustering to field-reported claims, surfacing recurring patterns across accounts and equipment lines without requiring structured data inputs or manual tagging.

The output is actionable: root causes that product engineers can act on, prioritized by frequency and severity, built on data you already have and are analyzing manually today, without a six-month data infrastructure program as a prerequisite.

Capture the Short-Term Gain. Plan for the End Goal.

This is not an argument against transformation. It's an argument for a more disciplined sequence.

The end goal — full automation of the feedback loop between field operations and product improvement — is real, achievable, and worth building toward. A field technician completes a service call; the failure data is captured automatically, classified, aggregated across the fleet, surfaced to the engineering team, and incorporated into the next design cycle.
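The loop described above (capture, classify, aggregate, surface) can be sketched in a few functions. This is a toy under stated assumptions, not a reference design: the `ServiceRecord` fields, the 1 to 5 severity scale, the keyword rules standing in for a real classification model, and the count-times-severity ordering are all hypothetical choices made for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class ServiceRecord:
    equipment_line: str
    notes: str
    severity: int  # hypothetical scale: 1 (minor) to 5 (safety-critical)


# Hypothetical keyword rules standing in for a real classification model.
FAILURE_KEYWORDS = {
    "seal_leak": ("seal", "leak"),
    "display_fault": ("display", "screen"),
}


def classify(record: ServiceRecord) -> str:
    """Return the first failure mode with a keyword found in the notes."""
    text = record.notes.lower()
    for mode, keywords in FAILURE_KEYWORDS.items():
        if any(k in text for k in keywords):
            return mode
    return "unclassified"


def aggregate(records: list[ServiceRecord]) -> dict[str, dict[str, int]]:
    """Count occurrences and track worst severity per failure mode across the fleet."""
    summary: dict[str, dict[str, int]] = defaultdict(lambda: {"count": 0, "max_severity": 0})
    for record in records:
        entry = summary[classify(record)]
        entry["count"] += 1
        entry["max_severity"] = max(entry["max_severity"], record.severity)
    return dict(summary)


def surface(summary: dict[str, dict[str, int]]) -> list[str]:
    """Order failure modes for engineering review by count times worst severity."""
    return sorted(
        summary,
        key=lambda mode: summary[mode]["count"] * summary[mode]["max_severity"],
        reverse=True,
    )


# Made-up service records for illustration.
records = [
    ServiceRecord("press-A", "Seal worn, minor leak at flange", 2),
    ServiceRecord("press-B", "Operator display went blank", 4),
    ServiceRecord("press-A", "Leak around main seal", 3),
]
print(surface(aggregate(records)))
```

Each stage is small enough to replace independently, which is what lets a team ship the first useful piece now and upgrade the classifier or the data source later.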

That goal is worth planning for. It is not worth waiting for as a prerequisite to generating any value.

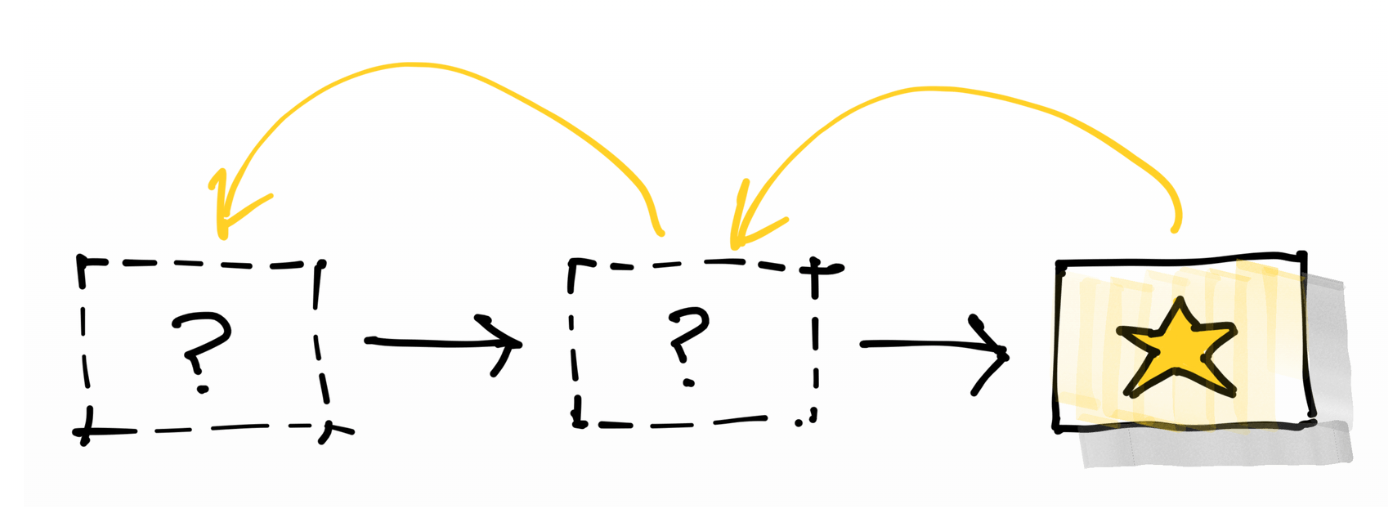

The manufacturers generating real ROI from AI right now started with a specific problem, implemented a targeted solution, measured the result, and built from there. The infrastructure followed the value, rather than preceding it.

Digital transformation, AI transformation, IoT 5.0 — the terminology will keep changing. The underlying question stays the same: where does the business value actually come from, and when do we start generating it?

The answer isn't after the lake is built. It's now, on the problem you can name, with a solution scoped to solve it.